Conditional flow matching

This post explores the generalization of Conditional Flow Matching (CFM), a simulation-free objective for training generative models (Lipman et al., 2022). Notably, CFM removes the requirement for a Gaussian source distribution.

1. Introduction

Starting with a source distribution $q_0$, we define a time-dependent map $\phi: [0,1] \times \mathbb{R}^d \to \mathbb{R}^d$. This flow map is induced by a vector field $u:[0,1] \times \mathbb{R}^d\to \mathbb{R}^d$ through the following ordinary differential equation (ODE):

\[\begin{align*} \frac{d}{dt} \phi_t(x) &= u_t(\phi_t(x)) \,, \\ \phi_0(x) &= x \,. \end{align*}\]A vector field $u_t$ is said to generate a probability density path $p_t$ if the corresponding flow map $\phi_t$ pushes forward the initial distribution such that

\[p_t = [\phi_t]_\#p_0 \,.\]The pushforward $p_t$ describes how the density of samples $x \sim p_0$ evolves as they are transported from their initial state to time $t$. We can verify that a vector field $u_t$ generates a probability path $p_t$ by checking that the pair satisfies the continuity equation:

\[\frac{\partial p_t}{\partial t} + \text{div}(p_tu_t) = 0 \,,\]where $\text{div}(.)$ indicates the divergence operator, which is defined as $\text{div}(F)(x) = \sum_i \frac{\partial F(x)_i}{ \partial x_i}$.
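As a small numerical illustration of the continuity equation, the sketch below checks it by central finite differences for a toy one-dimensional pair. The path $p_t = \mathcal{N}(0, (1+t)^2)$, the field $u_t(x) = x/(1+t)$, and all function names are assumptions chosen for this example, not something taken from the cited papers.

```python
import math

def density(x, t):
    """p_t(x) = N(x | 0, (1 + t)^2), an illustrative 1-D probability path."""
    s = 1.0 + t
    return math.exp(-0.5 * (x / s) ** 2) / (s * math.sqrt(2.0 * math.pi))

def velocity(x, t):
    """u_t(x) = x / (1 + t), the field of the flow phi_t(x) = (1 + t) x."""
    return x / (1.0 + t)

def continuity_residual(x, t, h=1e-4):
    """Central-difference estimate of dp_t/dt + d(p_t u_t)/dx at (x, t)."""
    def flux(y):
        return density(y, t) * velocity(y, t)
    dp_dt = (density(x, t + h) - density(x, t - h)) / (2.0 * h)
    dflux_dx = (flux(x + h) - flux(x - h)) / (2.0 * h)
    return dp_dt + dflux_dx

residuals = [continuity_residual(x, t)
             for x in (-1.5, 0.0, 0.7) for t in (0.1, 0.5, 0.9)]
print(max(abs(r) for r in residuals))  # only finite-difference error remains
```

The residual is not exactly zero because the derivatives are approximated, but it shrinks with the step size `h`, which is what we expect when $u_t$ generates $p_t$.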

The goal of Conditional Flow Matching (CFM) is to learn a neural vector field $u_\theta(x, t)$ such that its integration transforms a source distribution $q_0(x)$ into a target distribution $q_1(x)$. Once the velocity field is estimated, we can generate samples by following its trajectories to transport data from $q_0$ to $q_1$. Next we describe how to learn this neural vector field.
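To make the sampling step concrete, here is a minimal sketch that integrates the ODE with explicit Euler steps. Instead of a trained $u_\theta$, it uses a closed-form field for an assumed one-dimensional problem (source $\mathcal{N}(0,1)$, target $\mathcal{N}(M, S^2)$, straight-line trajectories); `M`, `S`, `velocity`, and `euler_sample` are names introduced only for this example.

```python
import random

M, S = 2.0, 0.5  # assumed target q_1 = N(M, S^2); the source q_0 is N(0, 1)

def velocity(x, t):
    """Closed-form field moving N(0, 1) to N(M, S^2) along straight lines."""
    return (S - 1.0) * (x - t * M) / (1.0 - t + t * S) + M

def euler_sample(x0, field, n_steps=100):
    """Integrate dx/dt = field(x, t) from t = 0 to t = 1 with explicit Euler."""
    x, h = x0, 1.0 / n_steps
    for k in range(n_steps):
        x = x + h * field(x, k * h)
    return x

rng = random.Random(0)
sources = [rng.gauss(0.0, 1.0) for _ in range(5000)]
samples = [euler_sample(x0, velocity) for x0 in sources]
mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(mean, var)  # should be close to M and S**2
```

With a learned field the same loop applies, with `velocity` replaced by the network; a higher-order ODE solver is a common alternative when trajectories are curved.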

2. Flow matching

While (Chen et al., 2018) proposed training such flows directly through differentiable ODE solvers, this approach is often computationally prohibitive. A more efficient alternative is to regress a vector field that induces a probability path between $p_0$ and $p_1$. However, there are infinitely many probability paths $p_t$ (equivalently, infinitely many velocity fields $u_t(x)$) connecting the two distributions.

Given a target velocity field $u_t(x)$ that generates a chosen path $p_t$, we obtain the flow matching objective

\[\mathcal{L}_\text{FM} = \mathbb{E}_{t, x\sim p_t(x)}[ \| u_t(x) - u_\theta(x, t) \|^2] \,.\]

3. Conditional flow matching

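The FM objective cannot be evaluated directly because the marginal vector field $u_t(x)$ is generally unknown. Conditional flow matching avoids this problem: we only require access to the conditioning distribution, the conditional probability path and its corresponding conditional vector field. We will discuss several design choices.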

3.1. Vector fields generating marginal probability paths

We start by specifying a probability path $p_t$. Suppose that the marginal probability path $p_t(x)$ is a mixture of conditional probability paths with some conditioning variable $z$,

\[p_t(x) = \int p_t(x|z) q(z) dz \,,\]where $q(z)$ is some distribution over the conditioning variable. The conditional paths must satisfy the boundary conditions $\mathbb{E}_z [p_0(x \vert z)] = q_0 (x)$ and $\mathbb{E}_z[p_1(x\vert z)] = q_1(x)$. We then have the following result.

If the conditional probability path $p_t(x\vert z)$ is generated by the conditional vector field $u_t(x\vert z)$ from initial condition $p_0(x\vert z)$, then the vector field

\[u_t(x) = \int u_t(x|z) \frac{ p_t(x|z) q(z)}{p_t(x)} dz\]generates the probability path $p_t(x)$.
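The marginal field is a posterior-weighted average of the conditional fields. The sketch below evaluates it for a hypothetical discrete $q(z)$ with two atoms and an illustrative one-dimensional Gaussian conditional path; `SIGMA`, `ATOMS`, and the function names are assumptions made for this example.

```python
import math

SIGMA = 0.1                        # assumed minimum standard deviation
ATOMS = [(-1.0, 0.5), (1.0, 0.5)]  # assumed q(z): two atoms z with their weights

def cond_density(x, t, z):
    """p_t(x | z) = N(x | t z, (1 - (1 - SIGMA) t)^2)."""
    s = 1.0 - (1.0 - SIGMA) * t
    return math.exp(-0.5 * ((x - t * z) / s) ** 2) / (s * math.sqrt(2.0 * math.pi))

def cond_velocity(x, t, z):
    """u_t(x | z) for the Gaussian path above."""
    return (z - (1.0 - SIGMA) * x) / (1.0 - (1.0 - SIGMA) * t)

def marginal_velocity(x, t):
    """u_t(x) = sum_z u_t(x | z) p_t(x | z) q(z) / p_t(x)."""
    weights = [cond_density(x, t, z) * q for z, q in ATOMS]
    p_t = sum(weights)
    weighted = sum(w * cond_velocity(x, t, z) for w, (z, _) in zip(weights, ATOMS))
    return weighted / p_t

print(marginal_velocity(0.0, 0.5))  # symmetric point: the two pulls cancel
print(marginal_velocity(1.0, 0.9), cond_velocity(1.0, 0.9, 1.0))  # nearly equal
```

Near $t = 1$ the posterior over $z$ concentrates on a single atom, so the marginal field agrees with the conditional field of that atom; at earlier times it blends both.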

The CFM objective is defined as:

\[\mathcal{L}_\text{CFM} = \mathbb{E}_{t, z\sim q(z), x\sim p_t(x|z)}[ \| u_t(x|z) - u_\theta(x, t) \|^2]\]Importantly, the gradients of FM and CFM w.r.t. the model parameters $\theta$ are identical, which allows us to regress against the simpler conditional vector field instead of the unknown marginal one.

Consider Gaussian conditional probability paths of the form

\[\begin{align} p_t(x|z) &= \mathcal{N}(x|\mu_t(z), \sigma_t(z)^2I) \,, \label{eq:cond} \end{align}\]where $\mu: [0,1] \times \mathbb{R}^d \to \mathbb{R}^d$ denotes the time-dependent mean of the Gaussian distribution, while $\sigma: [0,1] \times \mathbb{R}^d \to \mathbb{R}_{>0}$ describes a time-dependent scalar standard deviation. For such paths we have an important result.

The conditional vector field that generates the conditional probability path in Equation \eqref{eq:cond} has the form

\[u_t(x|z) = \frac{\dot{\sigma}_t(z)}{\sigma_t(z)} (x - \mu_t(z)) + \dot{\mu}_t(z) \,.\]
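One way to sanity-check this formula is to follow a single sample path $x_t = \mu_t(z) + \sigma_t(z)\epsilon$ and compare its time derivative with $u_t(x_t \vert z)$. The sketch below does this in one dimension for a hypothetical schedule (a sinusoidal mean and a linearly shrinking standard deviation) that was picked purely for illustration.

```python
import math

def gaussian_path_velocity(x, t, z, mu, dmu, sigma, dsigma):
    """u_t(x|z) = sigma'_t(z) / sigma_t(z) * (x - mu_t(z)) + mu'_t(z)."""
    return dsigma(t, z) / sigma(t, z) * (x - mu(t, z)) + dmu(t, z)

# Hypothetical schedule, chosen only for illustration (1-D, scalar z).
def mu(t, z):
    return math.sin(0.5 * math.pi * t) * z

def dmu(t, z):
    return 0.5 * math.pi * math.cos(0.5 * math.pi * t) * z

def sigma(t, z):
    return 1.0 - 0.9 * t

def dsigma(t, z):
    return -0.9

def trajectory(t, z, eps):
    """Sample path x_t = mu_t(z) + sigma_t(z) * eps for a fixed noise draw eps."""
    return mu(t, z) + sigma(t, z) * eps

z, eps, t, h = 1.5, -0.3, 0.4, 1e-5
x_t = trajectory(t, z, eps)
finite_diff = (trajectory(t + h, z, eps) - trajectory(t - h, z, eps)) / (2.0 * h)
formula = gaussian_path_velocity(x_t, t, z, mu, dmu, sigma, dsigma)
print(finite_diff, formula)  # the two values should agree closely
```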

3.2. Sources of conditional probability paths

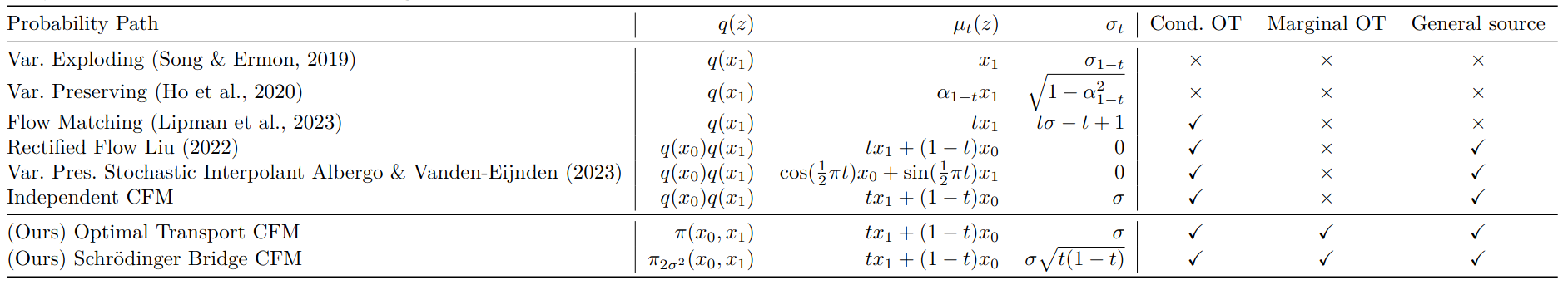

There are several ways of choosing $q(z)$, $p_t(. \vert z)$, and $u_t(. \vert z)$. Table 1 shows the probability paths of existing methods (Tong et al., 2023). In the following, we discuss some variants from prior work.

FM from Gaussian

By setting the condition $z=x_1$ and using a constant $\sigma >0$, (Lipman et al., 2022) define the Gaussian conditional path as:

\[p_t(x|z) = \mathcal{N}(x | tx_1, (t\sigma - t + 1)^2 I) \,.\]This results in the conditional vector field:

\[u_t(x|z) = \frac{x_1 - (1-\sigma)x}{1 - (1-\sigma)t} \,.\]In this framework, while each individual conditional path $p_t(x\vert z)$ follows an optimal transport (OT) trajectory from $p_0(x\vert z)$ to $p_1(x \vert z)$, the marginal path $p_t(x)$ is not in general an OT path from $p_0(x)$ to $p_1(x)$.

CFM with independent coupling

Using an independent coupling $q(z) = q(x_0)q(x_1)$, we define the conditional probability path as a Gaussian centered on a linear interpolation:

\[p_t(x|z) = \mathcal{N}(x|tx_1 + (1-t)x_0, \sigma^2I) \,.\]This leads to the conditional vector field:

\[u_t(x|z) = x_1 - x_0 \,.\]Notably, the source $q_0(x)$ can be any arbitrary distribution, even one with an intractable density. There is no requirement for $q_0(x)$ to be Gaussian. This independent CFM (I-CFM) is closely related to Rectified Flow (Liu, 2022) and Stochastic Interpolants (Albergo & Vanden-Eijnden, 2022). While I-CFM is capable of translating between any two arbitrary distributions, using it for unpaired translation typically results in content preservation issues. This is because I-CFM ignores the geometric structure of the data and fails to minimize the total displacement cost.
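A minimal sketch of how I-CFM training pairs and the Monte Carlo CFM loss might be assembled is shown below. It works with scalars for brevity; the uniform source, the Gaussian target, the two constant "models", and the function names are all assumptions for illustration rather than part of any reference implementation.

```python
import random

def icfm_batch(x0s, x1s, sigma, rng):
    """Build (t, x_t, target) triples for independently paired x0 and x1."""
    batch = []
    for x0, x1 in zip(x0s, x1s):
        t = rng.random()
        x_t = t * x1 + (1.0 - t) * x0 + sigma * rng.gauss(0.0, 1.0)
        batch.append((t, x_t, x1 - x0))  # regression target u_t(x|z) = x1 - x0
    return batch

def cfm_loss(model, batch):
    """Monte Carlo estimate of the CFM objective for a scalar model(x, t)."""
    return sum((target - model(x_t, t)) ** 2 for t, x_t, target in batch) / len(batch)

def zero_model(x, t):
    return 0.0

def mean_model(x, t):
    return 3.0  # constant guess equal to E[x1 - x0] in this toy setup

rng = random.Random(0)
x0s = [rng.uniform(-1.0, 1.0) for _ in range(2000)]  # non-Gaussian source samples
x1s = [rng.gauss(3.0, 0.5) for _ in range(2000)]     # assumed target samples
batch = icfm_batch(x0s, x1s, sigma=0.0, rng=rng)
print(cfm_loss(zero_model, batch), cfm_loss(mean_model, batch))
```

In an actual training loop, `model` would be the neural field $u_\theta$ and the loss would be minimized with a gradient-based optimizer; only samples from $q_0$ and $q_1$ are needed, never their densities.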

Optimal transport CFM

(Tong et al., 2023) demonstrated that the coupling $q(z)$ can be generalized so that $x_0$ and $x_1$ don’t have to be independent. The core CFM properties remain valid as long as $q(z)$ maintains the correct marginals $q(x_0)$ and $q(x_1)$. As a result, they propose $q(z)$ to be the 2-Wasserstein optimal transport plan $\pi$ such that $q(z) = \pi (x_0, x_1)$. Instead of sampling $x_0$ and $x_1$ independently, they are jointly sampled according to the optimal transport plan $\pi$. In practice, finding the global OT plan is computationally expensive for large datasets. We can instead compute a local OT coupling within each training minibatch.
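To illustrate the minibatch coupling, the sketch below uses a one-dimensional special case: for two equal-size batches and squared-distance cost, the optimal pairing simply matches sorted sources to sorted targets. This shortcut is an assumption of the example; in higher dimensions one would instead call a general assignment or OT solver on the pairwise cost matrix.

```python
import random

def minibatch_ot_pairs_1d(x0s, x1s):
    """Optimal pairing of two equal-size 1-D batches under squared distance.

    In one dimension the optimal assignment matches sorted sources to sorted
    targets; higher dimensions need a general assignment or OT solver.
    """
    return list(zip(sorted(x0s), sorted(x1s)))

def pairing_cost(pairs):
    """Total squared displacement of a list of (x0, x1) pairs."""
    return sum((x1 - x0) ** 2 for x0, x1 in pairs)

rng = random.Random(0)
x0s = [rng.gauss(0.0, 1.0) for _ in range(64)]
x1s = [rng.gauss(2.0, 0.5) for _ in range(64)]

independent = list(zip(x0s, x1s))          # I-CFM style pairing
coupled = minibatch_ot_pairs_1d(x0s, x1s)  # OT-CFM style pairing within the batch
print(pairing_cost(independent), pairing_cost(coupled))
```

The coupled pairs can then be fed to the same interpolation and regression target as in I-CFM; only the way $(x_0, x_1)$ are paired changes.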

Schrödinger bridge CFM

To improve the robustness of transport maps against noise and uncertainty in high-dimensional spaces, we can extend OT-CFM to Schrödinger bridge CFM (SB-CFM). We begin by defining the conditioning distribution as

\[q(z) = \pi_{2\sigma^2}(x_0, x_1) \,,\]where $\pi_{2\sigma^2}$ represents the solution to the entropy-regularized OT problem using the cost $\Vert x_0 - x_1 \Vert$ and an entropy regularization $2\sigma^2$. The conditional probability path and vector field are defined as

\[\begin{align*} p_t (x | z) &= \mathcal{N}(x | tx_1 + (1-t)x_0, t(1-t)\sigma^2 I) \\ u_t(x| z) &= \frac{1-2t}{2t(1-t)} (x - (tx_1 + (1-t)x_0)) + (x_1 - x_0) \,. \end{align*}\]By construction, the marginal vector field $u_t$ generates the same probability path as the solution of the SB problem.
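As a short check, the conditional vector field above can be recovered from the general Gaussian formula of Section 3.1. A sketch of the computation, taking $\mu_t(z) = tx_1 + (1-t)x_0$ and $\sigma_t(z) = \sigma\sqrt{t(1-t)}$ for $0 < t < 1$:

\[\begin{align*} \dot{\mu}_t(z) &= x_1 - x_0 \,, \\ \dot{\sigma}_t(z) &= \sigma \, \frac{1-2t}{2\sqrt{t(1-t)}} \,, \\ \frac{\dot{\sigma}_t(z)}{\sigma_t(z)} &= \frac{1-2t}{2t(1-t)} \,. \end{align*}\]Substituting these into $u_t(x\vert z) = \frac{\dot{\sigma}_t(z)}{\sigma_t(z)}(x - \mu_t(z)) + \dot{\mu}_t(z)$ gives the stated expression. The coefficient is singular at $t=0$ and $t=1$, so the formula is understood for $t$ strictly inside $(0,1)$.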

3.3. Log-likelihood computation

The relationship between the density and the vector field along a trajectory $x_t$ is described by the instantaneous change in log-probability (Chen et al., 2018)

\[\begin{align} \frac{d}{dt} \log p_t(x_t) = -\text{tr}\left( \frac{\partial}{\partial x} u_\theta(x_t, t) \right) \,. \label{eq:instance} \end{align}\]We can obtain the log-likelihood along the trajectory by integrating \eqref{eq:instance} over time

\[\log p_t(x_t) = \log p_\tau(x_\tau) - \int_{\tau}^t \text{tr}\left( \frac{\partial}{\partial x} u_\theta(x_s, s) \right) ds\,,\]where $0 \le \tau < t \le 1$. In practice, we employ Hutchinson’s trace estimator, which uses a random vector $\epsilon$ with $\mathbb{E}[\epsilon \epsilon^\top] = I$ to estimate the trace as $\epsilon^\top (\nabla_x u_\theta(., t)) \epsilon$.
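The trace estimator can be sketched in a few lines. The example below uses a toy linear field $u(x) = Ax$ in place of $u_\theta$, Rademacher probe vectors, and a central-difference Jacobian-vector product standing in for automatic differentiation; the matrix `A` and all function names are assumptions for this illustration.

```python
import random

A = [[1.0, 2.0], [3.0, -0.5]]  # assumed Jacobian of the toy field; trace is 0.5

def field(x):
    """Toy linear vector field u(x) = A x standing in for u_theta(., t)."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def jvp(f, x, v, h=1e-3):
    """Central-difference Jacobian-vector product (df/dx) v."""
    plus = f([xi + h * vi for xi, vi in zip(x, v)])
    minus = f([xi - h * vi for xi, vi in zip(x, v)])
    return [(p - m) / (2.0 * h) for p, m in zip(plus, minus)]

def hutchinson_trace(f, x, n_samples, rng):
    """Average of eps^T (df/dx) eps over random Rademacher vectors eps."""
    total = 0.0
    for _ in range(n_samples):
        eps = [rng.choice((-1.0, 1.0)) for _ in x]
        total += sum(e * j for e, j in zip(eps, jvp(f, x, eps)))
    return total / n_samples

rng = random.Random(0)
estimate = hutchinson_trace(field, [0.2, -1.0], n_samples=20000, rng=rng)
print(estimate)  # fluctuates around the exact trace 0.5
```

Each probe needs only one Jacobian-vector product rather than the full Jacobian, which is what makes the estimator attractive in high dimensions; the price is variance that decreases with the number of probes.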

3.4. Connection to diffusion models

We consider generative models that map samples $x_0$ from a Gaussian $q_0(x)$ to a data distribution $q_1(x)$. We define the interpolation between $x_0$ and $x_1$ as

\[x_t = \alpha_t x_1 + \beta_t x_0 \,.\]The following result gives a connection with the score function (Zhang et al., 2024)

Let $\lambda_t = \alpha_t / \beta_t$ denote the signal-to-noise ratio. The relationship between the score function $\nabla_x \log p_t(x)$ and the vector field $u_\theta(x,t)$ is given by

\[\nabla_x \log p_t(x_t) = \frac{1}{\beta_t^2} \left[ \left( \frac{d \log \lambda_t}{dt} \right)^{-1} \left( u_\theta(x_t, t) - \frac{d \log \beta_t}{dt}x_t \right) -x_t \right] \,.\]
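The conversion can be checked numerically on a problem where both quantities are known in closed form. The sketch below assumes a one-dimensional setup with $x_0 \sim \mathcal{N}(0,1)$ and $x_1 \sim \mathcal{N}(M, S^2)$ drawn independently, and uses the linear schedule $\alpha_t = t$, $\beta_t = 1-t$ as an example; `M`, `S`, and the function names are hypothetical.

```python
def score_from_velocity(u, x, alpha, beta, dalpha, dbeta):
    """Score at x_t = x from the velocity u, for x_t = alpha x_1 + beta x_0."""
    dlog_lambda = dalpha / alpha - dbeta / beta  # d/dt log(alpha_t / beta_t)
    dlog_beta = dbeta / beta                     # d/dt log(beta_t)
    return ((u - dlog_beta * x) / dlog_lambda - x) / beta ** 2

# Assumed 1-D check: x_0 ~ N(0, 1) and x_1 ~ N(M, S^2) drawn independently,
# with the linear schedule alpha_t = t, beta_t = 1 - t.
M, S = 2.0, 0.5

def exact_velocity(x, t):
    """E[x_1 - x_0 | x_t = x] for the jointly Gaussian toy problem."""
    var_t = (1.0 - t) ** 2 + (t * S) ** 2
    return M + (t * S ** 2 - (1.0 - t)) * (x - t * M) / var_t

def exact_score(x, t):
    """Score of p_t = N(t M, (1 - t)^2 + t^2 S^2)."""
    return -(x - t * M) / ((1.0 - t) ** 2 + (t * S) ** 2)

x, t = 0.8, 0.3
recovered = score_from_velocity(exact_velocity(x, t), x, t, 1.0 - t, 1.0, -1.0)
print(recovered, exact_score(x, t))  # the two values should agree
```

The function requires $0 < t < 1$, since both $\alpha_t$ and $\beta_t$ appear in denominators.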

When $\alpha_t=t$ and $\beta_t=1-t$, the score function simplifies to

\[\nabla_x \log p_t(x_t) = \frac{1}{1 - t} (-x_t + tu_\theta(x_t, t)) \,.\]

Conclusion

In this post, we have explored how Conditional Flow Matching (CFM) provides a simulation-free framework for training generative models. CFM avoids the high computational cost of training neural ODEs by simulation and offers more flexibility than diffusion models, since it does not require a Gaussian source distribution.

References

- Lipman, Y., Chen, R. T. Q., Ben-Hamu, H., Nickel, M., & Le, M. (2022). Flow matching for generative modeling. ArXiv Preprint ArXiv:2210.02747.

- Chen, R. T. Q., Rubanova, Y., Bettencourt, J., & Duvenaud, D. K. (2018). Neural ordinary differential equations. Advances in Neural Information Processing Systems, 31.

- Tong, A., Fatras, K., Malkin, N., Huguet, G., Zhang, Y., Rector-Brooks, J., Wolf, G., & Bengio, Y. (2023). Improving and generalizing flow-based generative models with minibatch optimal transport. ArXiv Preprint ArXiv:2302.00482.

- Liu, Q. (2022). Rectified flow: A marginal preserving approach to optimal transport. ArXiv Preprint ArXiv:2209.14577.

- Albergo, M. S., & Vanden-Eijnden, E. (2022). Building normalizing flows with stochastic interpolants. ArXiv Preprint ArXiv:2209.15571.

- Zhang, Y., Yu, P., Zhu, Y., Chang, Y., Gao, F., Wu, Y. N., & Leong, O. (2024). Flow priors for linear inverse problems via iterative corrupted trajectory matching. Advances in Neural Information Processing Systems, 37, 57389–57417.